If you'd like to learn more, please get in touch.

Context

As Solvento continued scaling its operations, the team began looking for ways to integrate AI into critical workflows without compromising trust or operational rigor. One process in particular stood out: the manual review of "proof of delivery" documents before a shipment could be approved and paid.

This step was essential, but time-consuming. It created operational bottlenecks, increased review costs, and extended payout times for users.

The opportunity was clear: replace a fully manual verification process with an AI-assisted one powered by an LLM, reducing waiting time while maintaining accountability.

Problem and opportunity

Through user interviews, we learned that reviewers needed clarity on what the system checked, transparency around potential errors, and a clear sense of responsibility in the final decision.

An automated decision with no explanation would feel opaque and risky. At the same time, asking users to re-review everything manually would defeat the purpose of automation.

The opportunity was to design an AI-assisted experience that accelerated approvals while keeping humans meaningfully in control.

Solution

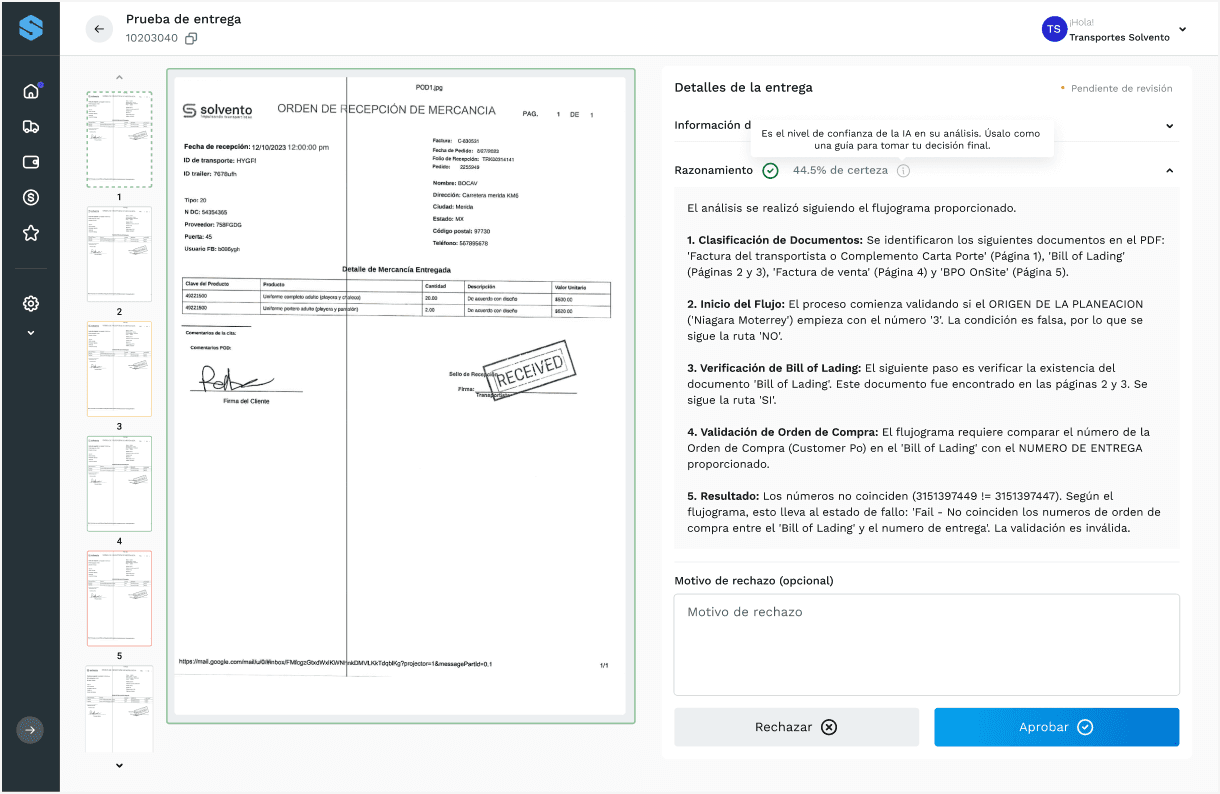

We introduced an LLM-powered review system that analyzes the uploaded proof of delivery and generates a structured evaluation before payment approval.

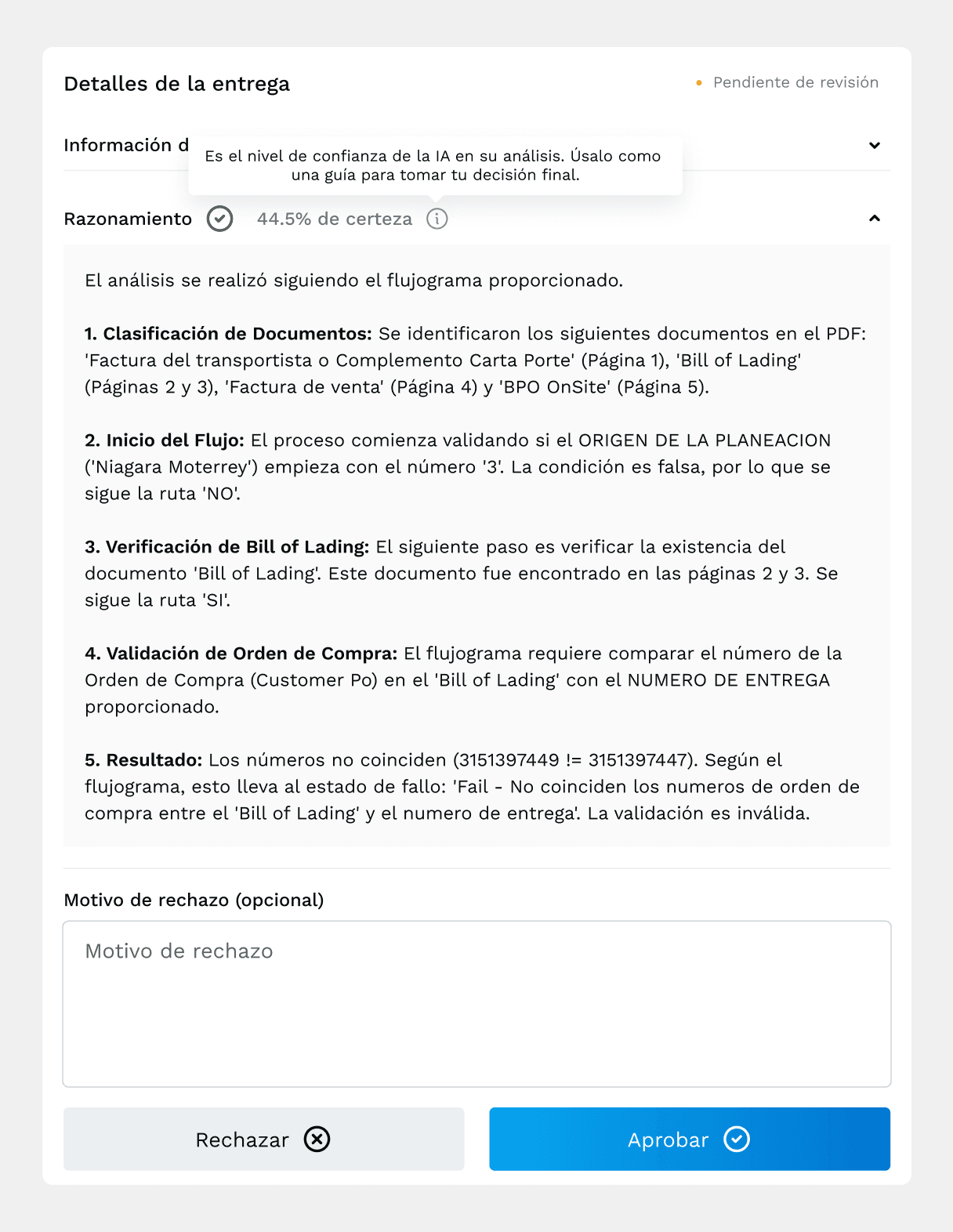

To address uncertainty, we made confidence visible.

Each review clearly displays the LLM’s confidence percentage, alongside a breakdown of the detected fields and potential inconsistencies that could trigger red flags. Instead of presenting a binary "approved / rejected" result, the system surfaces structured insights that support decision-making.
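To make the idea of a structured evaluation concrete, here is a minimal sketch of what such a review payload could look like. It is illustrative only: the class names, field names, and the 0 to 1 confidence scale are assumptions, not Solvento's actual schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DetectedField:
    name: str             # hypothetical field name, e.g. "delivery_date"
    value: Optional[str]  # None when the model could not read the field


@dataclass
class ReviewResult:
    confidence: float  # assumed to be a 0.0-1.0 score reported with the review
    fields: List[DetectedField] = field(default_factory=list)
    red_flags: List[str] = field(default_factory=list)

    def unread_fields(self) -> List[str]:
        # Names of fields the model could not extract from the document.
        return [f.name for f in self.fields if f.value is None]

    def summary(self) -> str:
        # Report confidence as a whole-number percentage and count the issues,
        # without emitting an approve/reject verdict: that stays with the user.
        return (
            f"Confidence: {round(self.confidence * 100)}% | "
            f"unread fields: {len(self.unread_fields())} | "
            f"red flags: {len(self.red_flags)}"
        )


# Example data is invented for illustration.
example = ReviewResult(
    confidence=0.82,
    fields=[
        DetectedField("delivery_date", "2024-05-14"),
        DetectedField("recipient_signature", None),
    ],
    red_flags=["Signature not found"],
)
print(example.summary())
```

The sketch deliberately has no "approved" or "rejected" field: the payload carries evidence, and the decision is recorded separately once the user makes it.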

The user remains the final authority. They can approve or reject the delivery based on the AI’s analysis.

For cases requiring higher certainty, we also introduced an optional manual verification service, where a Solvento team member performs a traditional review. This allowed us to layer automation without eliminating human oversight.

If a proof of delivery is rejected, users can upload a corrected version and reinitiate the process seamlessly.

Details

Designing this feature required balancing efficiency with psychological safety.

The interface avoids overstating AI certainty. Confidence levels are presented clearly but neutrally, preventing false precision. Potential issues are highlighted contextually, guiding attention without overwhelming the user.

The experience was structured around two core principles: transparency and shared responsibility. Transparency meant clearly showing what the model evaluated. Shared responsibility ensured the human remained in the loop.

By framing the LLM as an assistant rather than an authority, we preserved trust while significantly accelerating the workflow.

The feature reduced approval times by 15%, directly improving payout velocity and operational efficiency: a meaningful win for both users and the business.